History of Deep Learning

These days, you hear a lot about machine learning (or ML) and artificial intelligence (or AI) – both good or bad depending on your source.

Many of us immediately conjure up images of HAL from 2001: A Space Odyssey, the Terminator cyborgs, C-3PO, Data from Star Trek, or Samantha from Her when the subject turns to AI. And many may not even be familiar with machine learning as a separate subject.

The phrases are often tossed around interchangeably, but they’re not exactly the same thing. In the most general sense, machine learning has evolved from AI.

In the Google Trends graph above, you can see that since around 2022 we have huge increase in searching for what an AI is.

But if you’re still thinking robots and killer cyborgs sent from the future, you’re doing it a disservice. Both AI and machine learning have a lot more going on than “just” the fate of mankind.

Artificial intelligence can be considered the all-encompassing umbrella. It refers to computer programs being able to “think,” behave, and do things as a human being might do them. It’s usually classified as either general or applied/narrow (specific to a single area or action).

Machine learning goes beyond that. It involves providing machines with the data they need to “learn” how to do something without being explicitly programmed to do it. An algorithm such as decision tree learning, inductive logic programming, clustering, reinforcement learning, or Bayesian networks helps them make sense of the inputted data.

Machine learning was a giant step forward for AI. Forward…but not all the way to the finish line.

The development of neural networks – a computer system set up to classify and organize data much like the human brain – has advanced things even further.

Based on this categorization and analysis, a machine learning system can make an educated “guess” based on the greatest probability, and many are even able to learn from their mistakes, making them “smarter” as they go along.

Still with me? Good, because I’m about to introduce the next development under the AI umbrella.

Enter deep learning.

Deep Learning

If machine learning is a subfield of artificial intelligence, then deep learning could be called a subfield of machine learning. The evolution of the subject has gone artificial intelligence > machine learning > deep learning.

The expression “deep learning” was first used when talking about Artificial Neural Networks (ANNs) by Igor Aizenberg and colleagues in or around 2000.

Since then, the term has really started to take over the AI conversation, despite the fact that there are other branches of study taking place, like natural language processing, or NLP.

In a nutshell, deep learning is a way to achieve machine learning. As ANNs became more powerful and complex – and literally deeper with many layers and neurons – the ability for deep learning to facilitate robust machine learning and produce AI increased.

Deep Learning uses what’s called “supervised” learning – where the neural network is trained using labeled data – or “unsupervised” learning – where the network uses unlabeled data and looks for recurring patterns.

The neurons at each level make their “guesses” and most-probable predictions, and then pass on that info to the next level, all the way to the eventual outcome.

Confused? You’re not alone. It’s a perplexing topic.

It’s the most exciting development in the world of artificial intelligence right now. But instead of trying to grasp the intricacies of the field – which could be an ongoing and extensive series of articles unto itself – let’s just take a look at some of the major developments in the history of machine learning (and by extension, deep learning and AI). It’s come a long way in relatively little time.

1943 – The first mathematical model of a neural network

Walter Pitts and Warren McCulloch

Obviously, for machine and deep learning to work, we needed an established understanding of the neural networks of the human brain.

Walter Pitts, a logician, and Warren McCulloch, a neuroscientist, gave us that piece of the puzzle in 1943 when they created the first mathematical model of a neural network. Published in their seminal work “A Logical Calculus of Ideas Immanent in Nervous Activity”, they proposed a combination of mathematics and algorithms that aimed to mimic human thought processes.

Their model – typically called McCulloch-Pitts neurons – is still the standard today (although it has evolved over the years).

1950 – The prediction of machine learning

Alan Turing

Turing, a British mathematician, is perhaps most well-known for his involvement in code-breaking during World War II.

But his contributions to mathematics and science don’t stop there. In 1947, he predicted the development of machine learning, even going so far as to describe the impact it could have on jobs.

In 1950, Turing proposed just such a machine, even hinting at genetic algorithms, in his paper “Computing Machinery and Intelligence.” In it, he crafted what has been dubbed The Turing Test – although he himself called it The Imitation Game – to determine whether a computer can “think.”

At its simplest, the test requires a machine to carry on a conversation via text with a human being. If after five minutes the human is convinced that they’re talking to another human, the machine is said to have passed.

It would take 60 years for any machine to do so, although many still debate the validity of the results.

1952 – First machine learning programs

Arthur Samuel

Arthur Samuel invented machine learning and coined the phrase “machine learning” in 1952. He is revered as the father of machine learning. Upon joining the Poughkeepsie Laboratory at IBM, Arthur Samuel would go on to create the first computer learning programs. The programs were built to play the game of checkers. Arthur Samuel’s program was unique in that each time checkers was played, the computer would always get better, correcting its mistakes and finding better ways to win from that data. This automatic learning would be one of the first examples of machine learning.

1957 – Setting the foundation for deep neural networks

Frank Rosenblatt

Rosenblatt, a psychologist, submitted a paper entitled “The Perceptron: A Perceiving and Recognizing Automaton” to Cornell Aeronautical Laboratory in 1957.

He declared he would “construct an electronic or electromechanical system which would learn to recognize similarities or identities between patterns of optical, electrical, or tonal information, in a manner which may be closely analogous to the perceptual processes of a biological brain.” Whew.

His idea was more hardware than software or algorithm, but it did plant the seeds of bottom-up learning, and is widely recognized as the foundation of deep neural networks (DNN).

1959 – Discovery of simple cells and complex cells

David H. Hubel and Torsten Wiesel

In 1959, neurophysiologists and Nobel Laureates David H. Hubel and Torsten Wiesel discovered two types of cells in the primary visual cortex: simple cells and complex cells.

Many artificial neural networks (ANNs) are inspired by these biological observations in one way or another. While not a milestone for deep learning specifically, it was definitely one that heavily influenced the field.

1960 – Control theory

Henry J. Kelley

Kelley was a professor of aerospace and ocean engineering at the Virginia Polytechnic Institute.

In 1960, he published “Gradient Theory of Optimal Flight Paths,” itself a major and widely recognized paper in his field. Many of his ideas about control theory – the behavior of systems with inputs, and how that behavior is modified by feedback – have been applied directly to AI and ANNs over the years.

They were used to develop the basics of a continuous backpropagation model (aka the backward propagation of errors) used in training neural networks.

1965 – The first working deep learning networks

Alexey Ivakhnenko and V.G. Lapa

Mathematician Ivakhnenko and associates including Lapa arguably created the first working deep learning networks in 1965, applying what had been only theories and ideas up to that point.

Ivakhnenko developed the Group Method of Data Handling (GMDH) – defined as a “family of inductive algorithms for computer-based mathematical modeling of multi-parametric datasets that features fully automatic structural and parametric optimization of models” – and applied it to neural networks.

For that reason alone, many consider Ivakhnenko the father of modern deep learning.

His learning algorithms used deep feedforward multilayer perceptrons using statistical methods at each layer to find the best features and forward them through the system.

Using GMDH, Ivakhnenko was able to create an 8-layer deep network in 1971, and he successfully demonstrated the learning process in a computer identification system called Alpha.

1979-80 – An ANN learns how to recognize visual patterns

Kunihiko Fukushima

A recognized innovator in neural networks, Fukushima is perhaps best known for the creation of Neocognitron, an artificial neural network that learned how to recognize visual patterns. It has been used for handwritten character and other pattern recognition tasks, recommender systems, and even natural language processing.

His work – which was heavily influenced by Hubel and Wiesel – led to the development of the first convolutional neural networks, which are based on the visual cortex organization found in animals. They are variations of multilayer perceptrons designed to use minimal amounts of preprocessing.

1982 – The creation of the Hopfield Networks

John Hopfield

In 1982, Hopfield created and popularized the system that now bears his name.

Hopfield Networks are a recurrent neural network that serve as a content-addressable memory system, and they remain a popular implementation tool for deep learning in the 21st century.

1985 – A program learns to pronounce English words

Terry Sejnowski

Computational neuroscientist Terry Sejnowski used his understanding of the learning process to create NETtalk in 1985.

The program learned how to pronounce English words in much the same way a child does, and was able to improve over time while converting text to speech.

1986 – Improvements in shape recognition and word prediction

David Rumelhart, Geoffrey Hinton, and Ronald J. Williams

In a 1986 paper entitled “Learning Representations by Back-propagating Errors,” Rumelhart, Hinton, and Williams described in greater detail the process of backpropagation.

They showed how it could vastly improve the existing neural networks for many tasks such as shape recognition, word prediction, and more.

Despite some setbacks after that initial success, Hinton kept at his research during the Second AI Winter to reach new levels of success and acclaim. He is considered by many in the field to be the godfather of deep learning.

1989 – Machines read handwritten digits

Yann LeCun

LeCun – another rock star in the AI and DL universe – combined convolutional neural networks (which he was instrumental in developing) with recent backpropagation theories to read handwritten digits in 1989.

His system was eventually used to read handwritten checks and zip codes by NCR and other companies, processing anywhere from 10-20% of cashed checks in the United States in the late 90s and early 2000s.

1989 – Q-learning

Christopher Watkins

Watkins published his PhD thesis – “Learning from Delayed Rewards” – in 1989. In it, he introduced the concept of Q-learning, which greatly improves the practicality and feasibility of reinforcement learning in machines.

This new algorithm suggested it was possible to learn optimal control directly without modelling the transition probabilities or expected rewards of the Markov Decision Process.

1993 – A ‘very deep learning’ task is solved

Jürgen Schmidhuber

German computer scientist Schmidhuber solved a “very deep learning” task in 1993 that required more than 1,000 layers in the recurrent neural network.

It was a huge leap forward in the complexity and ability of neural networks.

1995 – Support vector machines

Corinna Cortes and Vladimir Vapnik

Support vector machines – or SVMs – have been around since the 1960s, tweaked and refined by many over the decades.

The current standard model was designed by Cortes and Vapnik in 1993 and presented in 1995.

A SVM is basically a system for recognizing and mapping similar data, and can be used in text categorization, handwritten character recognition, and image classification as it relates to machine learning and deep learning.

1997 – Long short-term memory was proposed

Jürgen Schmidhuber and Sepp Hochreiter

A recurrent neural network framework, long short-term memory (LSTM) was proposed by Schmidhuber and Hochreiter in 1997.

They improve both the efficiency and practicality of recurrent neural networks by eliminating the long-term dependency problem (when necessary information is located too far “back” in the RNN and gets “lost”). LSTM networks can “remember” that information for a longer period of time.

Refined over time, LSTM networks are widely used in DL circles, and Google recently implemented it into its speech-recognition software for Android-powered smartphones.

1998 – Gradient-based learning

Yann LeCun

LeCun was instrumental in yet another advancement in the field of deep learning when he published his “Gradient-Based Learning Applied to Document Recognition” paper in 1998.

The Stochastic gradient descent algorithm (aka gradient-based learning) combined with the backpropagation algorithm is the preferred and increasingly successful approach to deep learning.

2009 – Launch of ImageNet

Fei-Fei Li

A professor and head of the Artificial Intelligence Lab at Stanford University, Fei-Fei Li launched ImageNet in 2009.

As of 2017, it’s a very large and free database of more than 14 million (14,197,122 at last count) labeled images available to researchers, educators, and students.

Labeled data – such as these images – are needed to “train” neural nets in supervised learning.

Images are labeled and organized according to Wordnet, a lexical database of English words – nouns, verbs, adverbs, and adjectives – sorted by groups of synonyms called synsets.

2011 – Creation of AlexNet

Alex Krizhevsky

Between 2011 and 2012, Alex Krizhevsky won several international machine and deep learning competitions with his creation AlexNet, a convolutional neural network.

AlexNet built off and improved upon LeNet5 (built by Yann LeCun years earlier). It initially contained only eight layers – five convolutional followed by three fully connected layers – and strengthened the speed and dropout using rectified linear units.

Its success kicked off a convolutional neural network renaissance in the deep learning community.

2012 – The Cat Experiment

It may sound cute and insignificant, but the so-called “Cat Experiment” was a major step forward.

Using a neural network spread over thousands of computers, the team presented 10,000,000 unlabeled images – randomly taken from YouTube – to the system and allowed it to run analyses on the data.

When this unsupervised learning session was complete, the program had taught itself to identify and recognize cats, performing nearly 70% better than previous attempts at unsupervised learning.

It wasn’t perfect, though. The network recognized only about 15% of the presented objects. That said, it was yet another baby step towards genuine AI.

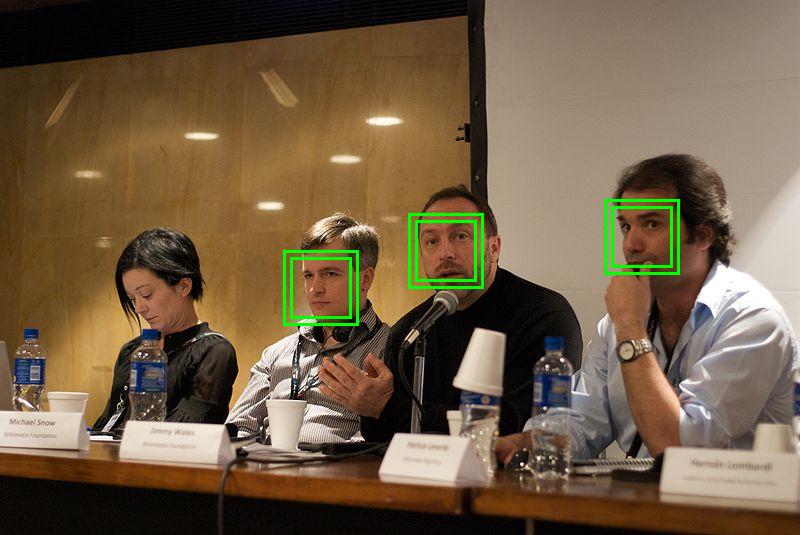

2014 – DeepFace

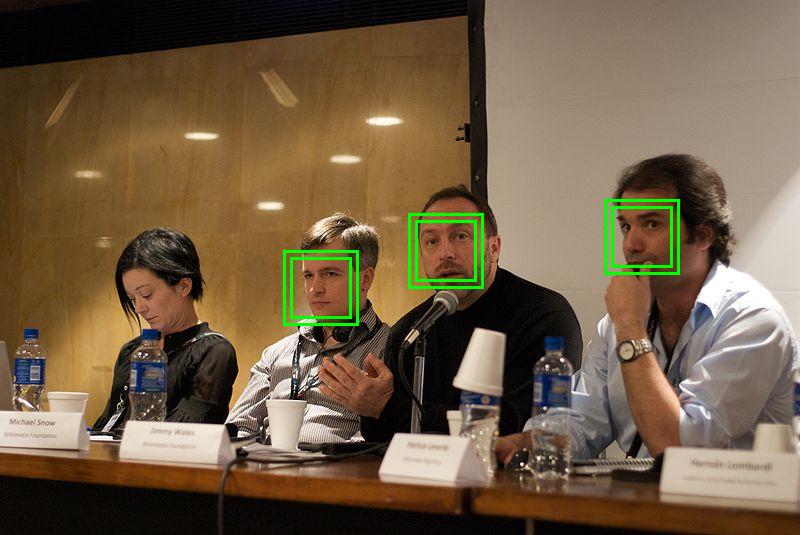

Monster platforms are often the first thinking outside the box, and none is bigger than Facebook.

Developed and released to the world in 2014, the social media behemoth’s deep learning system – nicknamed DeepFace – uses neural networks to identify faces with 97.35% accuracy. That’s an improvement of 27% over previous efforts, and a figure that rivals that of humans (which is reported to be 97.5%).

Google Photos uses a similar program.

2014 – Generative Adversarial Networks (GAN)

Introduced in 2014 by a team of researchers lead by Ian Goodfellow, an authority no less than Yann LeCun himself had this to say about GANs:

They’re kind of a big deal.

Generative adversarial networks enable models to tackle unsupervised learning, which is more or less the end goal in the artificial intelligence community.

Essentially, a GAN uses two competing networks: the first takes in data and attempts to create indistinguishable samples, while the second receives both the data and created samples, and must determine if each data point is genuine or generated.

Learning simultaneously, the networks compete against one another and push each other to get “smarter” faster.

2016 - Powerful machine learning products

Cray Inc., as well as many other businesses like it, are now able to offer powerful machine and deep learning products and solutions.

Using Microsoft’s neural-network software on its XC50 supercomputers with 1,000 Nvidia Tesla P100 graphic processing units, they can perform deep learning tasks on data in a fraction of the time they used to take – hours instead of days

2017 - The Transformer Revolution

Google’s paper “Attention Is All You Need” introduced the Transformer architecture, replacing recurrent networks with self-attention mechanisms.

This innovation became the foundation for nearly all modern language models.

It also spurred a paradigm shift from narrow, task-specific neural nets toward large, generalizable architectures capable of scaling with data and compute.

2018 - Pretraining and Transfer Learning Go Mainstream

The arrival of BERT (Bidirectional Encoder Representations from Transformers) by Google changed NLP forever.

Rather than training from scratch, researchers began pretraining massive models on large text corpora, then fine-tuning them for specific tasks.

This “transfer learning” strategy drastically reduced data requirements and opened the door to general-purpose language understanding.

Meanwhile, models like GPT-2 and XLNet expanded the frontier of unsupervised text generation.

2019 - Scaling Up: Bigger Models, Bigger Compute

Deep learning entered the era of scale.

Frameworks such as TensorFlow 2.0 and PyTorch 1.x standardized APIs for large-model training, while specialized hardware (GPUs, TPUs, and cloud clusters) became widespread.

Parameter counts soared from millions to billions, sparking the “bigger is better” philosophy in research.

At the same time, attention turned to compute efficiency and environmental impact as training costs exploded.

2020 - AI for Science: AlphaFold and the Pandemic Push

AlphaFold 2 from DeepMind solved the decades-old protein-folding problem, proving deep learning’s power in scientific discovery.

The COVID-19 pandemic accelerated AI use in healthcare - diagnostics, molecular modeling, and medical imaging.

Remote-work tools and automation heavily integrated ML for productivity, speech recognition, and translation.

Deep learning became essential infrastructure rather than an experimental technology.

2021 - The Dawn of Generative AI

A new wave of creativity began.

DALL-E, CLIP, and Diffusion Models introduced text-to-image generation, while GPT-3 popularized large-scale text generation for chatbots, writing, and coding assistance.

The line between creative and analytical AI blurred.

“Multimodality” - systems that process both language and images emerged as the next frontier.

This year marked the cultural turning point where AI started to generate, not just recognize.

2022 - The Generative Boom and Foundation Models

The term “foundation models” entered mainstream AI vocabulary, describing gigantic pre-trained architectures like GPT-3, PaLM, and Stable Diffusion.

These models could be adapted to thousands of downstream tasks, ushering in a general-purpose era.

Diffusion models achieved photorealistic image synthesis, open-source AI communities thrived, and global excitement over generative AI soared.

Industry and academia began grappling with ethics, safety, and bias in large-scale AI.

2023 - The Chatbot Revolution and Everyday AI

Generative AI became consumer-facing.

ChatGPT, Claude, and Bard (later Gemini) introduced natural conversation with LLMs to the public.

Businesses rapidly adopted generative AI for writing, coding, data analysis, and design.

The open-source movement gained speed (e.g., LLaMA, Mistral), democratizing access to frontier models.

AI governance, hallucination control, and data transparency became central research themes.

2024 - Multimodality and Long-Context Intelligence

Deep learning models expanded beyond text.

New architectures like GPT-4o, Gemini 1.5, and Claude 3 Opus integrated text, images, audio, and video seamlessly.

Long-context models could handle documents with millions of tokens, enabling reasoning across books, datasets, and multimodal interactions.

AI became embedded in productivity suites, operating systems, and creative tools - shifting from novelty to ubiquity. With AI tools it becomes easier to track the market no matter if this is in retail or hospitality.

2025 - Efficiency, Alignment, and the Maturity of Deep Learning

By 2025, deep learning has entered its maturity phase.

Focus shifts from scaling size to scaling efficiency - through techniques like sparse attention, quantization, retrieval-augmented generation, and modular training.

Research emphasizes alignment, interpretability, and human-AI collaboration, not just raw capability.

AI systems act as copilots in science, engineering, and creativity. Data scraping becomes easier with tools like import.io.

As Ekundayo et al. (2025) describe, the field now stands at a “post-growth” moment: deep learning is no longer emerging - it’s everywhere.

Fun and Games

It may not be saving the world, but any history of machine learning and deep learning would be remiss if it didn’t mention some of the key achievements over the years as they relate to games and competing against human beings:

- 1992: Gerald Tesauro develops TD-Gammon, a computer program that used an artificial neural network to learn how to play backgammon.

- 1997: Deep Blue – designed by IBM – beat chess grandmaster Garry Kasparov in a six-game series.

- 2011: Watson – a question answering system developed by IBM – competed on Jeopardy! against Ken Jennings and Brad Rutter. Using a combination of machine learning, natural language processing, and information retrieval techniques, Watson was able to win the competition over the course of three matches

- 2016: Google’s AlphaGo program beat Lee Sedol of Korea, a top-ranked international Go player. Developed by DeepMind, AlphaGo uses machine learning and tree search techniques. The program is scheduled to face off against current #1 ranked player Ke Jie of China in May 2017.

- 2017: AlphaGo Zero learns entirely from self-play, surpassing all previous Go programs. AlphaZero soon follows, mastering chess and shogi without human input.

- 2018 - 2019: OpenAI Five achieves professional-level play in Dota 2, while AlphaStar reaches Grandmaster rank in StarCraft II, proving deep learning’s power in complex real-time strategy.

- 2020: MuZero learns to play chess, shogi, and Atari without being told the rules, showing true model-based learning.

- 2021 – 2022: DeepMind’s Player of Games and Meta’s CICERO blend strategy, negotiation, and natural language, mastering both poker and Diplomacy.

- 2023 – 2025: AI becomes a creative collaborator. Game studios use large models to generate dialogue, levels, and adaptive NPCs that learn from players — shifting the focus from competition to co-creation.

A Rough Timeline

There have been a lot of developments and advancements in the AI, ML, and DL fields over the past 60 years. To discuss every one of them would fill a book, let alone a blog post.

To boil it down to a rough timeline, deep learning might look something like this:

- 1960s: Shallow neural networks

- 1960-70s: Backpropagation emerges

- 1974-80: First AI Winter

- 1980s: Convolution emerges

- 1987-93: Second AI Winter

- 1990s: Unsupervised deep learning

- 1990s-2000s: Supervised deep learning back en vogue

- 2006-201s: Modern deep learning

- 2016-2025: Large-scale deep learning, foundation models, multimodality, generative AI, deployment & impact

Today, deep learning is present in our lives in ways we may not even consider: Google’s voice and image recognition, Netflix and Amazon’s recommendation engines, Apple’s Siri, automatic email and text replies, chatbots, and more. With data all around us, there’s more information for these programs to analyze and improve upon.

It’s all around you. Where will deep learning head next? Where will it take us? It’s hard to say. The field continues to evolve, and the next major breakthrough may be just around the corner, or not for years. There is no set timeline for something so complex.

One thing is for certain, though. It’s a very exciting time to be alive…to witness the blending of true intelligence and machines. Machine learning history shows us that the future is here in many ways.

Recommended Reading

Python Web Scraping: What Are The Pros and Cons

Data Mining vs. Machine Learning: What’s The Difference?

Understanding the Importance of Data: Why Data is Crucial for Business and Society

These days, you hear a lot about machine learning (or ML) and artificial intelligence (or AI) – both good or bad depending on your source.

Many of us immediately conjure up images of HAL from 2001: A Space Odyssey, the Terminator cyborgs, C-3PO, Data from Star Trek, or Samantha from Her when the subject turns to AI. And many may not even be familiar with machine learning as a separate subject.

The phrases are often tossed around interchangeably, but they’re not exactly the same thing. In the most general sense, machine learning has evolved from AI.

In the Google Trends graph above, you can see that since around 2022 we have huge increase in searching for what an AI is.

But if you’re still thinking robots and killer cyborgs sent from the future, you’re doing it a disservice. Both AI and machine learning have a lot more going on than “just” the fate of mankind.

Artificial intelligence can be considered the all-encompassing umbrella. It refers to computer programs being able to “think,” behave, and do things as a human being might do them. It’s usually classified as either general or applied/narrow (specific to a single area or action).

Machine learning goes beyond that. It involves providing machines with the data they need to “learn” how to do something without being explicitly programmed to do it. An algorithm such as decision tree learning, inductive logic programming, clustering, reinforcement learning, or Bayesian networks helps them make sense of the inputted data.

Machine learning was a giant step forward for AI. Forward…but not all the way to the finish line.

The development of neural networks – a computer system set up to classify and organize data much like the human brain – has advanced things even further.

Based on this categorization and analysis, a machine learning system can make an educated “guess” based on the greatest probability, and many are even able to learn from their mistakes, making them “smarter” as they go along.

Still with me? Good, because I’m about to introduce the next development under the AI umbrella.

Enter deep learning.

Deep Learning

If machine learning is a subfield of artificial intelligence, then deep learning could be called a subfield of machine learning. The evolution of the subject has gone artificial intelligence > machine learning > deep learning.

The expression “deep learning” was first used when talking about Artificial Neural Networks (ANNs) by Igor Aizenberg and colleagues in or around 2000.

Since then, the term has really started to take over the AI conversation, despite the fact that there are other branches of study taking place, like natural language processing, or NLP.

In a nutshell, deep learning is a way to achieve machine learning. As ANNs became more powerful and complex – and literally deeper with many layers and neurons – the ability for deep learning to facilitate robust machine learning and produce AI increased.

Deep Learning uses what’s called “supervised” learning – where the neural network is trained using labeled data – or “unsupervised” learning – where the network uses unlabeled data and looks for recurring patterns.

The neurons at each level make their “guesses” and most-probable predictions, and then pass on that info to the next level, all the way to the eventual outcome.

Confused? You’re not alone. It’s a perplexing topic.

It’s the most exciting development in the world of artificial intelligence right now. But instead of trying to grasp the intricacies of the field – which could be an ongoing and extensive series of articles unto itself – let’s just take a look at some of the major developments in the history of machine learning (and by extension, deep learning and AI). It’s come a long way in relatively little time.

1943 – The first mathematical model of a neural network

Walter Pitts and Warren McCulloch

Obviously, for machine and deep learning to work, we needed an established understanding of the neural networks of the human brain.

Walter Pitts, a logician, and Warren McCulloch, a neuroscientist, gave us that piece of the puzzle in 1943 when they created the first mathematical model of a neural network. Published in their seminal work “A Logical Calculus of Ideas Immanent in Nervous Activity”, they proposed a combination of mathematics and algorithms that aimed to mimic human thought processes.

Their model – typically called McCulloch-Pitts neurons – is still the standard today (although it has evolved over the years).

1950 – The prediction of machine learning

Alan Turing

Turing, a British mathematician, is perhaps most well-known for his involvement in code-breaking during World War II.

But his contributions to mathematics and science don’t stop there. In 1947, he predicted the development of machine learning, even going so far as to describe the impact it could have on jobs.

In 1950, Turing proposed just such a machine, even hinting at genetic algorithms, in his paper “Computing Machinery and Intelligence.” In it, he crafted what has been dubbed The Turing Test – although he himself called it The Imitation Game – to determine whether a computer can “think.”

At its simplest, the test requires a machine to carry on a conversation via text with a human being. If after five minutes the human is convinced that they’re talking to another human, the machine is said to have passed.

It would take 60 years for any machine to do so, although many still debate the validity of the results.

1952 – First machine learning programs

Arthur Samuel

Arthur Samuel invented machine learning and coined the phrase “machine learning” in 1952. He is revered as the father of machine learning. Upon joining the Poughkeepsie Laboratory at IBM, Arthur Samuel would go on to create the first computer learning programs. The programs were built to play the game of checkers. Arthur Samuel’s program was unique in that each time checkers was played, the computer would always get better, correcting its mistakes and finding better ways to win from that data. This automatic learning would be one of the first examples of machine learning.

1957 – Setting the foundation for deep neural networks

Frank Rosenblatt

Rosenblatt, a psychologist, submitted a paper entitled “The Perceptron: A Perceiving and Recognizing Automaton” to Cornell Aeronautical Laboratory in 1957.

He declared he would “construct an electronic or electromechanical system which would learn to recognize similarities or identities between patterns of optical, electrical, or tonal information, in a manner which may be closely analogous to the perceptual processes of a biological brain.” Whew.

His idea was more hardware than software or algorithm, but it did plant the seeds of bottom-up learning, and is widely recognized as the foundation of deep neural networks (DNN).

1959 – Discovery of simple cells and complex cells

David H. Hubel and Torsten Wiesel

In 1959, neurophysiologists and Nobel Laureates David H. Hubel and Torsten Wiesel discovered two types of cells in the primary visual cortex: simple cells and complex cells.

Many artificial neural networks (ANNs) are inspired by these biological observations in one way or another. While not a milestone for deep learning specifically, it was definitely one that heavily influenced the field.

1960 – Control theory

Henry J. Kelley

Kelley was a professor of aerospace and ocean engineering at the Virginia Polytechnic Institute.

In 1960, he published “Gradient Theory of Optimal Flight Paths,” itself a major and widely recognized paper in his field. Many of his ideas about control theory – the behavior of systems with inputs, and how that behavior is modified by feedback – have been applied directly to AI and ANNs over the years.

They were used to develop the basics of a continuous backpropagation model (aka the backward propagation of errors) used in training neural networks.

1965 – The first working deep learning networks

Alexey Ivakhnenko and V.G. Lapa

Mathematician Ivakhnenko and associates including Lapa arguably created the first working deep learning networks in 1965, applying what had been only theories and ideas up to that point.

Ivakhnenko developed the Group Method of Data Handling (GMDH) – defined as a “family of inductive algorithms for computer-based mathematical modeling of multi-parametric datasets that features fully automatic structural and parametric optimization of models” – and applied it to neural networks.

For that reason alone, many consider Ivakhnenko the father of modern deep learning.

His learning algorithms used deep feedforward multilayer perceptrons using statistical methods at each layer to find the best features and forward them through the system.

Using GMDH, Ivakhnenko was able to create an 8-layer deep network in 1971, and he successfully demonstrated the learning process in a computer identification system called Alpha.

1979-80 – An ANN learns how to recognize visual patterns

Kunihiko Fukushima

A recognized innovator in neural networks, Fukushima is perhaps best known for the creation of Neocognitron, an artificial neural network that learned how to recognize visual patterns. It has been used for handwritten character and other pattern recognition tasks, recommender systems, and even natural language processing.

His work – which was heavily influenced by Hubel and Wiesel – led to the development of the first convolutional neural networks, which are based on the visual cortex organization found in animals. They are variations of multilayer perceptrons designed to use minimal amounts of preprocessing.

1982 – The creation of the Hopfield Networks

John Hopfield

In 1982, Hopfield created and popularized the system that now bears his name.

Hopfield Networks are a recurrent neural network that serve as a content-addressable memory system, and they remain a popular implementation tool for deep learning in the 21st century.

1985 – A program learns to pronounce English words

Terry Sejnowski

Computational neuroscientist Terry Sejnowski used his understanding of the learning process to create NETtalk in 1985.

The program learned how to pronounce English words in much the same way a child does, and was able to improve over time while converting text to speech.

1986 – Improvements in shape recognition and word prediction

David Rumelhart, Geoffrey Hinton, and Ronald J. Williams

In a 1986 paper entitled “Learning Representations by Back-propagating Errors,” Rumelhart, Hinton, and Williams described in greater detail the process of backpropagation.

They showed how it could vastly improve the existing neural networks for many tasks such as shape recognition, word prediction, and more.

Despite some setbacks after that initial success, Hinton kept at his research during the Second AI Winter to reach new levels of success and acclaim. He is considered by many in the field to be the godfather of deep learning.

1989 – Machines read handwritten digits

Yann LeCun

LeCun – another rock star in the AI and DL universe – combined convolutional neural networks (which he was instrumental in developing) with recent backpropagation theories to read handwritten digits in 1989.

His system was eventually used to read handwritten checks and zip codes by NCR and other companies, processing anywhere from 10-20% of cashed checks in the United States in the late 90s and early 2000s.

1989 – Q-learning

Christopher Watkins

Watkins published his PhD thesis – “Learning from Delayed Rewards” – in 1989. In it, he introduced the concept of Q-learning, which greatly improves the practicality and feasibility of reinforcement learning in machines.

This new algorithm suggested it was possible to learn optimal control directly without modelling the transition probabilities or expected rewards of the Markov Decision Process.

1993 – A ‘very deep learning’ task is solved

Jürgen Schmidhuber

German computer scientist Schmidhuber solved a “very deep learning” task in 1993 that required more than 1,000 layers in the recurrent neural network.

It was a huge leap forward in the complexity and ability of neural networks.

1995 – Support vector machines

Corinna Cortes and Vladimir Vapnik

Support vector machines – or SVMs – have been around since the 1960s, tweaked and refined by many over the decades.

The current standard model was designed by Cortes and Vapnik in 1993 and presented in 1995.

A SVM is basically a system for recognizing and mapping similar data, and can be used in text categorization, handwritten character recognition, and image classification as it relates to machine learning and deep learning.

1997 – Long short-term memory was proposed

Jürgen Schmidhuber and Sepp Hochreiter

A recurrent neural network framework, long short-term memory (LSTM) was proposed by Schmidhuber and Hochreiter in 1997.

They improve both the efficiency and practicality of recurrent neural networks by eliminating the long-term dependency problem (when necessary information is located too far “back” in the RNN and gets “lost”). LSTM networks can “remember” that information for a longer period of time.

Refined over time, LSTM networks are widely used in DL circles, and Google recently implemented it into its speech-recognition software for Android-powered smartphones.

1998 – Gradient-based learning

Yann LeCun

LeCun was instrumental in yet another advancement in the field of deep learning when he published his “Gradient-Based Learning Applied to Document Recognition” paper in 1998.

The Stochastic gradient descent algorithm (aka gradient-based learning) combined with the backpropagation algorithm is the preferred and increasingly successful approach to deep learning.

2009 – Launch of ImageNet

Fei-Fei Li

A professor and head of the Artificial Intelligence Lab at Stanford University, Fei-Fei Li launched ImageNet in 2009.

As of 2017, it’s a very large and free database of more than 14 million (14,197,122 at last count) labeled images available to researchers, educators, and students.

Labeled data – such as these images – are needed to “train” neural nets in supervised learning.

Images are labeled and organized according to Wordnet, a lexical database of English words – nouns, verbs, adverbs, and adjectives – sorted by groups of synonyms called synsets.

2011 – Creation of AlexNet

Alex Krizhevsky

Between 2011 and 2012, Alex Krizhevsky won several international machine and deep learning competitions with his creation AlexNet, a convolutional neural network.

AlexNet built off and improved upon LeNet5 (built by Yann LeCun years earlier). It initially contained only eight layers – five convolutional followed by three fully connected layers – and strengthened the speed and dropout using rectified linear units.

Its success kicked off a convolutional neural network renaissance in the deep learning community.

2012 – The Cat Experiment

It may sound cute and insignificant, but the so-called “Cat Experiment” was a major step forward.

Using a neural network spread over thousands of computers, the team presented 10,000,000 unlabeled images – randomly taken from YouTube – to the system and allowed it to run analyses on the data.

When this unsupervised learning session was complete, the program had taught itself to identify and recognize cats, performing nearly 70% better than previous attempts at unsupervised learning.

It wasn’t perfect, though. The network recognized only about 15% of the presented objects. That said, it was yet another baby step towards genuine AI.

2014 – DeepFace

Monster platforms are often the first thinking outside the box, and none is bigger than Facebook.

Developed and released to the world in 2014, the social media behemoth’s deep learning system – nicknamed DeepFace – uses neural networks to identify faces with 97.35% accuracy. That’s an improvement of 27% over previous efforts, and a figure that rivals that of humans (which is reported to be 97.5%).

Google Photos uses a similar program.

2014 – Generative Adversarial Networks (GAN)

Introduced in 2014 by a team of researchers lead by Ian Goodfellow, an authority no less than Yann LeCun himself had this to say about GANs:

They’re kind of a big deal.

Generative adversarial networks enable models to tackle unsupervised learning, which is more or less the end goal in the artificial intelligence community.

Essentially, a GAN uses two competing networks: the first takes in data and attempts to create indistinguishable samples, while the second receives both the data and created samples, and must determine if each data point is genuine or generated.

Learning simultaneously, the networks compete against one another and push each other to get “smarter” faster.

2016 - Powerful machine learning products

Cray Inc., as well as many other businesses like it, are now able to offer powerful machine and deep learning products and solutions.

Using Microsoft’s neural-network software on its XC50 supercomputers with 1,000 Nvidia Tesla P100 graphic processing units, they can perform deep learning tasks on data in a fraction of the time they used to take – hours instead of days

2017 - The Transformer Revolution

Google’s paper “Attention Is All You Need” introduced the Transformer architecture, replacing recurrent networks with self-attention mechanisms.

This innovation became the foundation for nearly all modern language models.

It also spurred a paradigm shift from narrow, task-specific neural nets toward large, generalizable architectures capable of scaling with data and compute.

2018 - Pretraining and Transfer Learning Go Mainstream

The arrival of BERT (Bidirectional Encoder Representations from Transformers) by Google changed NLP forever.

Rather than training from scratch, researchers began pretraining massive models on large text corpora, then fine-tuning them for specific tasks.

This “transfer learning” strategy drastically reduced data requirements and opened the door to general-purpose language understanding.

Meanwhile, models like GPT-2 and XLNet expanded the frontier of unsupervised text generation.

2019 - Scaling Up: Bigger Models, Bigger Compute

Deep learning entered the era of scale.

Frameworks such as TensorFlow 2.0 and PyTorch 1.x standardized APIs for large-model training, while specialized hardware (GPUs, TPUs, and cloud clusters) became widespread.

Parameter counts soared from millions to billions, sparking the “bigger is better” philosophy in research.

At the same time, attention turned to compute efficiency and environmental impact as training costs exploded.

2020 - AI for Science: AlphaFold and the Pandemic Push

AlphaFold 2 from DeepMind solved the decades-old protein-folding problem, proving deep learning’s power in scientific discovery.

The COVID-19 pandemic accelerated AI use in healthcare - diagnostics, molecular modeling, and medical imaging.

Remote-work tools and automation heavily integrated ML for productivity, speech recognition, and translation.

Deep learning became essential infrastructure rather than an experimental technology.

2021 - The Dawn of Generative AI

A new wave of creativity began.

DALL-E, CLIP, and Diffusion Models introduced text-to-image generation, while GPT-3 popularized large-scale text generation for chatbots, writing, and coding assistance.

The line between creative and analytical AI blurred.

“Multimodality” - systems that process both language and images emerged as the next frontier.

This year marked the cultural turning point where AI started to generate, not just recognize.

2022 - The Generative Boom and Foundation Models

The term “foundation models” entered mainstream AI vocabulary, describing gigantic pre-trained architectures like GPT-3, PaLM, and Stable Diffusion.

These models could be adapted to thousands of downstream tasks, ushering in a general-purpose era.

Diffusion models achieved photorealistic image synthesis, open-source AI communities thrived, and global excitement over generative AI soared.

Industry and academia began grappling with ethics, safety, and bias in large-scale AI.

2023 - The Chatbot Revolution and Everyday AI

Generative AI became consumer-facing.

ChatGPT, Claude, and Bard (later Gemini) introduced natural conversation with LLMs to the public.

Businesses rapidly adopted generative AI for writing, coding, data analysis, and design.

The open-source movement gained speed (e.g., LLaMA, Mistral), democratizing access to frontier models.

AI governance, hallucination control, and data transparency became central research themes.

2024 - Multimodality and Long-Context Intelligence

Deep learning models expanded beyond text.

New architectures like GPT-4o, Gemini 1.5, and Claude 3 Opus integrated text, images, audio, and video seamlessly.

Long-context models could handle documents with millions of tokens, enabling reasoning across books, datasets, and multimodal interactions.

AI became embedded in productivity suites, operating systems, and creative tools - shifting from novelty to ubiquity. With AI tools it becomes easier to track the market no matter if this is in retail or hospitality.

2025 - Efficiency, Alignment, and the Maturity of Deep Learning

By 2025, deep learning has entered its maturity phase.

Focus shifts from scaling size to scaling efficiency - through techniques like sparse attention, quantization, retrieval-augmented generation, and modular training.

Research emphasizes alignment, interpretability, and human-AI collaboration, not just raw capability.

AI systems act as copilots in science, engineering, and creativity. Data scraping becomes easier with tools like import.io.

As Ekundayo et al. (2025) describe, the field now stands at a “post-growth” moment: deep learning is no longer emerging - it’s everywhere.

Fun and Games

It may not be saving the world, but any history of machine learning and deep learning would be remiss if it didn’t mention some of the key achievements over the years as they relate to games and competing against human beings:

- 1992: Gerald Tesauro develops TD-Gammon, a computer program that used an artificial neural network to learn how to play backgammon.

- 1997: Deep Blue – designed by IBM – beat chess grandmaster Garry Kasparov in a six-game series.

- 2011: Watson – a question answering system developed by IBM – competed on Jeopardy! against Ken Jennings and Brad Rutter. Using a combination of machine learning, natural language processing, and information retrieval techniques, Watson was able to win the competition over the course of three matches

- 2016: Google’s AlphaGo program beat Lee Sedol of Korea, a top-ranked international Go player. Developed by DeepMind, AlphaGo uses machine learning and tree search techniques. The program is scheduled to face off against current #1 ranked player Ke Jie of China in May 2017.

- 2017: AlphaGo Zero learns entirely from self-play, surpassing all previous Go programs. AlphaZero soon follows, mastering chess and shogi without human input.

- 2018 - 2019: OpenAI Five achieves professional-level play in Dota 2, while AlphaStar reaches Grandmaster rank in StarCraft II, proving deep learning’s power in complex real-time strategy.

- 2020: MuZero learns to play chess, shogi, and Atari without being told the rules, showing true model-based learning.

- 2021 – 2022: DeepMind’s Player of Games and Meta’s CICERO blend strategy, negotiation, and natural language, mastering both poker and Diplomacy.

- 2023 – 2025: AI becomes a creative collaborator. Game studios use large models to generate dialogue, levels, and adaptive NPCs that learn from players — shifting the focus from competition to co-creation.

A Rough Timeline

There have been a lot of developments and advancements in the AI, ML, and DL fields over the past 60 years. To discuss every one of them would fill a book, let alone a blog post.

To boil it down to a rough timeline, deep learning might look something like this:

- 1960s: Shallow neural networks

- 1960-70s: Backpropagation emerges

- 1974-80: First AI Winter

- 1980s: Convolution emerges

- 1987-93: Second AI Winter

- 1990s: Unsupervised deep learning

- 1990s-2000s: Supervised deep learning back en vogue

- 2006-201s: Modern deep learning

- 2016-2025: Large-scale deep learning, foundation models, multimodality, generative AI, deployment & impact

Today, deep learning is present in our lives in ways we may not even consider: Google’s voice and image recognition, Netflix and Amazon’s recommendation engines, Apple’s Siri, automatic email and text replies, chatbots, and more. With data all around us, there’s more information for these programs to analyze and improve upon.

It’s all around you. Where will deep learning head next? Where will it take us? It’s hard to say. The field continues to evolve, and the next major breakthrough may be just around the corner, or not for years. There is no set timeline for something so complex.

One thing is for certain, though. It’s a very exciting time to be alive…to witness the blending of true intelligence and machines. Machine learning history shows us that the future is here in many ways.

Recommended Reading

Python Web Scraping: What Are The Pros and Cons

Data Mining vs. Machine Learning: What’s The Difference?

Understanding the Importance of Data: Why Data is Crucial for Business and Society